Study Notes for #60DaysofCloud – Compute Concepts

Throughout October, I took part in the #30DaysofCloud challenge. Inspired by Temi Olukoko, I decided to try and build a consistent habit of committing to a bit of learning every day. Although I did miss some days – especially on weekends, when I didn’t want to even think about AWS – I still got some consistent study in, which I am quite proud of. 😄

I decided to extend the challenge to 60 days (if it all goes well, maybe heading to 90 then 10 😉). For most of this challenge, I’ve been going through Stephane’s Udemy course on passing the Solutions Architect Associate exam. Back in March, I actually sat the SAA-C01 and unfortunately didn’t pass the first time (I missed the passing grade by about 2%!).

But I’m back now to give it another go! I don’t know if I’ll be attempting it this year, but I have spent the last month or so focusing on learning the content from the course. If anything, it has helped me with my day job and future career in cloud so it is always worth doing even if I don’t sit the exam this year.

I was inspired to write this post as I’ve just completed the Udemy course and wanted to do a little bit of revision on the things that I’ve learned so far. My key focus right now is getting back into the zone of exam papers by committing to completing papers every few days whilst going back through the content and doing any extra reading where needed.

Throughout my #30DaysofCloud journey, I actually created short videos on YouTube going through my notes and doing some exam questions too. If you’re interested in that sort of thing, you can check them out in this playlist.

For today’s post, I wanted to go through some of the concepts I learned about a few compute services. As I’m writing this post, I hope to count it as revision! 😛 Who knows, you might learn something as well.

EC2 – Elastic Compute Cloud

This service encompasses four different parts:

- Launching virtual machines – Elastic Compute Cloud (EC2)

- Storing data on virtual drives – Elastic Block Store (EBS)

- Distributing load across machines – Elastic Load Balancing (ELB)

- Scaling the services using an auto-scaling group (ASG)

Also included in this section:

- Creating Amazon Machine Images (AMI)

- Using security groups (SG), which act as firewalls around your instances

- IPs – Private, Public, Elastic

- Elastic Network Interface (ENI)

Features of EC2

You can get into your EC2 instance via SSH or EC2 Instance Connect. SSH is when you use your command line to control a remote server (in this case, a virtual machine in AWS). For this to work, you need your .pem private key file. If you encounter an error such as “unprotected private key file”, the key’s permissions are too open, and you need to run chmod (e.g. chmod 400 on the .pem file) so that only you can read it. EC2 Instance Connect is a browser-based EC2 connection that works out of the box with Amazon Linux 2 machines.
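To make the permissions fix concrete, here is a minimal sketch of what `chmod 400` does, written in Python. It uses a throwaway temporary file in place of a real key, and the function name `restrict_key_permissions` is just something I made up for illustration.

```python
import os
import stat
import tempfile

def restrict_key_permissions(key_path):
    """Make a private key readable by its owner only (same effect as `chmod 400`)."""
    os.chmod(key_path, stat.S_IRUSR)  # 0o400: owner can read, nobody else can do anything
    return stat.S_IMODE(os.stat(key_path).st_mode)

# Demo on a throwaway file standing in for a real .pem key (assumes Linux/macOS).
with tempfile.NamedTemporaryFile(suffix=".pem", delete=False) as tmp:
    demo_key = tmp.name

demo_mode = restrict_key_permissions(demo_key)
print(oct(demo_mode))
os.remove(demo_key)
```

Once the key is locked down like this, SSH should stop complaining about an unprotected private key file.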

EC2 User Data: you can attach a script to your EC2 instance before launching it, so that it automatically runs bootstrap tasks on first boot, such as updating software or downloading common files. This script runs as the root user.
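As a rough sketch of what a User Data script could look like, the snippet below builds a small bash script and base64-encodes it, which is the form the raw EC2 API expects (the console and SDKs usually do the encoding for you). The commands are only an illustration and assume an Amazon Linux 2 style machine; `build_user_data` is a hypothetical helper, not an AWS function.

```python
import base64

def build_user_data(commands):
    """Join shell commands into a bash script suitable for EC2 User Data."""
    lines = ["#!/bin/bash"] + list(commands)
    return "\n".join(lines) + "\n"

# Illustrative bootstrap tasks: update packages and start a web server.
script = build_user_data([
    "yum update -y",
    "yum install -y httpd",
    "systemctl start httpd",
])

# The raw API expects base64; the script is decoded and run as root on first boot.
encoded = base64.b64encode(script.encode("utf-8")).decode("ascii")
print(script)
```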

EC2 Instance Launch Types

- On-Demand Instances: great for short, unpredictable workloads.

- Reserved Instances: great for steady, predictable workloads that you can commit to for 1 or 3 years (e.g. a database).

- Spot Instances: great for short workloads that can cope with being interrupted, super cheap (cheaper than On-Demand).

- Spot max price: you define the maximum price you are willing to pay. If the current Spot price rises above that maximum, you lose the instance (after a 2-minute grace period).

- Spot block: blocking an instance for a specified timeframe without any interruptions.

- Spot fleets: a set of Spot Instances that together meet a target capacity. You can choose an allocation strategy based on lowest price, diversity of instance pools, or available capacity.

- Dedicated Instances: great for when you need instances that are just for you.

- Dedicated Hosts: great for when you need full control of instance placement and sockets. Mostly used for licensing, but is the most expensive.
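The Spot pricing rule is the one I find easiest to get backwards, so here is a tiny sketch of it: you keep the instance while the current Spot price is at or below your maximum, and lose it once the price goes above. The prices are made up, and real interruptions can also happen for capacity reasons, which this ignores.

```python
def spot_instance_survives(max_price, current_spot_price):
    """True while the current Spot price is at or below our maximum price."""
    return current_spot_price <= max_price

hourly_prices = [0.031, 0.035, 0.042, 0.038]  # made-up Spot prices in USD/hour
max_price = 0.040

survived_hours = 0
for price in hourly_prices:
    if not spot_instance_survives(max_price, price):
        break  # price went above our max: the instance is reclaimed
    survived_hours += 1

print(survived_hours)
```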

EC2 instance types

- R – When you need a lot of RAM. E.g. in-memory caches.

- C – When you need good CPU. E.g. compute, databases.

- M – e.g. general web applications. Think “medium”/balanced.

- I – When you need good I/O e.g. databases

- G – When you need good GPU e.g. video rendering, machine learning

- T2/T3 – e.g. general web applications

Concept: “Burst” and “Burst credits”

- When you launch an EC2 instance, you share physical CPU with other instances.

- Every burstable instance has a balance of “burst credits”, which it spends whenever it runs above its baseline CPU.

- When an instance uses up its burst credits i.e. bursts too much, the CPU is throttled back to the baseline and performance worsens.

- If the machine stops bursting, it regains credits again over time.

- It might be wise to move to a non-burstable instance (e.g. C) if you keep on bursting.

- T2/T3 are burstable instance types.

- T2/T3 Unlimited lets you keep bursting without losing performance even when your credits run out, but you pay extra for the additional CPU usage.
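To see how credits drain and refill, here is a simplified minute-by-minute simulation. It assumes one credit equals one vCPU at 100% for one minute, and the default numbers (6 credits earned per hour, 30 to start, a cap of 144) are illustrative values I picked for the example. The real accounting has more detail, so treat this as a sketch.

```python
def simulate_burst(demand, earn_per_hour=6.0, start_credits=30.0, max_credits=144.0):
    """Simulate a burstable instance minute by minute.

    demand: CPU fraction wanted each minute (1.0 = a full vCPU).
    Returns (CPU fraction delivered per minute, credits left at the end).
    """
    baseline = earn_per_hour / 60.0  # credits earned per minute, also the baseline CPU fraction
    credits = start_credits
    delivered = []
    for wanted in demand:
        available = credits + baseline   # what we could spend this minute
        used = min(wanted, available)    # throttled once the credits run out
        credits = min(max_credits, available - used)
        delivered.append(used)
    return delivered, credits

# An hour of full-speed bursting, then an hour of idling.
busy, left = simulate_burst([1.0] * 60)
idle, regained = simulate_burst([0.0] * 60, start_credits=left)
print(busy[0], busy[-1], regained)
```

In this run the instance gets full CPU at first, drops to the 10% baseline once the credits are gone, and earns credits back while idle.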

EC2 Placement Groups

You can place your EC2 instances in specific ways using placement groups.

- Cluster: clusters instances together on the same hardware (rack) in the same Availability Zone (AZ). This gives low latency and is great for high network performance, but it is bad for disaster recovery because a rack failure takes everything down.

- Spread: instances are spread across different hardware and can span different AZs. You can only have 7 running instances per AZ per spread placement group. Great for high availability.

- Partition: instances are put in different partitions (racks of hardware). Only 7 partitions per AZ, but up to 100s of EC2 instances. Mostly used for Kafka, HDFS, HBase, Cassandra.

EC2 Hibernate

You can hibernate your instance instead of stopping/terminating it when you want to preserve the in-memory state, i.e. “freeze” it. It works by writing the contents of RAM to the root EBS volume (which needs to be encrypted for this to work!)

This feature only works for instance types: C, M and R. Instances cannot be hibernated for more than 60 days!

Amazon Machine Images

An AMI is an image that is used to launch our EC2 instances. It defines the OS you use, the pre-installed packages that you need, and any configurations you’ve specifically set up. This gives you faster boot times and lets you deploy instances from a “template”.

They are region-specific, but can be copied to other regions. If you share your AMI with others it is still “yours” but if they copy it to other regions, it becomes theirs.

AMIs live in S3 but you can’t see them on the S3 console.

Security Groups

Security Groups are the firewalls of our EC2 instances. They control the traffic that goes in and out of our instances. Instances can have multiple SGs.

- Inbound: traffic going into the machine e.g. ssh

- Outbound: traffic going out of the machine e.g. the instance downloading software updates from the internet.

By default, all inbound traffic is blocked and all outbound traffic is authorised. SGs can authorise other SGs too. For example, EC2-1 has SG-1, which allows anything with SG-2 attached (such as EC2-2) to communicate with it.
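Here is a small model of how I picture inbound rules being checked: everything is denied unless some rule allows it, and a rule’s source can be either an IP range or another security group. The class, the function and the `sg-1`/`sg-2` IDs are hypothetical names for this sketch, not AWS API objects.

```python
import ipaddress
from dataclasses import dataclass

@dataclass(frozen=True)
class InboundRule:
    port: int
    source: str  # a CIDR block such as "0.0.0.0/0", or a security group ID such as "sg-2"

def inbound_allowed(rules, port, source_ip, source_groups=frozenset()):
    """Return True if any inbound rule allows this traffic."""
    for rule in rules:
        if rule.port != port:
            continue
        if rule.source.startswith("sg-"):
            # SG-to-SG rule: allowed if the sender has that group attached.
            if rule.source in source_groups:
                return True
        elif ipaddress.ip_address(source_ip) in ipaddress.ip_network(rule.source):
            return True
    return False  # default: inbound traffic is blocked

# SG-1 allows SSH from one office range, and HTTPS from anything in SG-2.
sg_1_rules = [InboundRule(22, "203.0.113.0/24"), InboundRule(443, "sg-2")]

print(inbound_allowed(sg_1_rules, 22, "203.0.113.7"))
print(inbound_allowed(sg_1_rules, 443, "10.0.0.5", {"sg-2"}))
print(inbound_allowed(sg_1_rules, 443, "10.0.0.5"))
```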

Private, Public, Elastic IPs

There are IPv4 and IPv6 addresses (IPv6 addresses are longer, and are used more for IoT).

- Private IP: only accessible to the private network.

- Public IP: accessible over the internet.

- Elastic IP: These are static/fixed public IPs. By default you only have 5 Elastic IPs per region in your account, but you can ask AWS to increase this. When you assign an Elastic IP to an instance, the instance keeps that IP even if you stop/start it. If an Elastic IP is not associated with any instance, you are charged for it.

Elastic Network Interfaces (ENI)

ENIs are virtual network cards that give instances access to a network. They have:

- A primary private IPv4 address

- One or more secondary private IPv4 addresses

- One Elastic IP per private IPv4

- One public IPv4

- One or more SGs

- A MAC address

- They are bound to a specific AZ

Elastic Load Balancing (ELB)

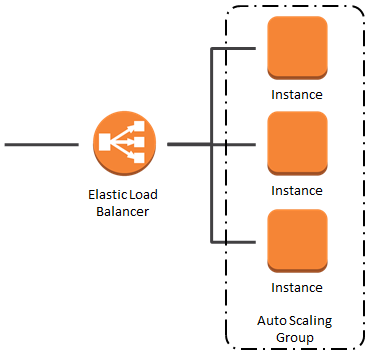

Load balancing is when you spread the “load” across instances. ELB does this for our EC2 instances. If you put an ELB in front of your instances, the users hit the ELB rather than an instance directly. It is a single point of access for users. Some key features:

- The ELB’s security group typically has port 80 (HTTP) and port 443 (HTTPS) open to the internet. HTTPS can be enforced via security groups, e.g. by only opening port 443.

- Security groups on instances need to reference the ELB’s SG specifically so that users can only reach the instances through the ELB.

- Health checks on EC2 instances can be performed – to check if instances are healthy/unhealthy so that it can route traffic to a healthy instance

- ELB stickiness with cookies – allows users to be redirected to the same instance behind the load balancer every single time to not lose a session for example. But this can lead to an imbalance of load.

- Highly available, so it can spread load across availability zones (Cross-Zone Balancing). This is always on for ALBs, but disabled by default for NLB and CLB.

- It can separate public and private traffic
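Health checks and stickiness made more sense to me once I pictured them as code. Below is a toy round-robin balancer: unhealthy instances are skipped, and a session cookie pins a user to the same instance for as long as it stays healthy. The class and instance names are invented, and real ELBs are far more involved than this.

```python
class LoadBalancer:
    """Toy round-robin load balancer with health checks and sticky sessions."""

    def __init__(self, instances):
        self.instances = list(instances)
        self.healthy = set(self.instances)
        self.sticky = {}  # session cookie -> instance
        self._next = 0

    def mark_unhealthy(self, instance):
        """Record a failed health check so the instance stops receiving traffic."""
        self.healthy.discard(instance)

    def route(self, cookie=None):
        # Stickiness: reuse the previous instance if it is still healthy.
        if cookie in self.sticky and self.sticky[cookie] in self.healthy:
            return self.sticky[cookie]
        candidates = [i for i in self.instances if i in self.healthy]
        if not candidates:
            raise RuntimeError("no healthy instances")
        target = candidates[self._next % len(candidates)]
        self._next += 1
        if cookie is not None:
            self.sticky[cookie] = target
        return target

lb = LoadBalancer(["i-a", "i-b", "i-c"])
print([lb.route() for _ in range(4)])
```

Requests without a cookie rotate through the instances, while a user with a cookie keeps landing on the same one, which is exactly how stickiness can cause an imbalance of load.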

There are 3 different types of ELBs:

- Classic (CLB) – Version 1 of ELB. HTTP, HTTPS, TCP. Fixed hostname.

- Network (NLB) – TCP, TLS (secure TCP), UDP. High performance. You get one static IP per AZ! This is good for when you want specific IPs.

- Application (ALB) – HTTP, HTTPS, WebSocket. Used to route to applications in different target groups. A single ALB can be set up to route to different applications using Rules based on multiple routes (i.e. URL paths, query strings, different headers). Great for microservices. Also has a port mapping feature to redirect to a dynamic port in ECS.
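ALB rules are checked in priority order and the first match wins, with a default rule as the fallback. The sketch below mimics that with host and path-prefix conditions only. The rule format and the `tg-...` target group names are made up for the example.

```python
def pick_target_group(rules, host, path, default_target_group):
    """Return the target group of the first rule that matches the request."""
    for rule in rules:  # rules are assumed to be sorted by priority already
        host_matches = rule.get("host") in (None, host)
        path_matches = path.startswith(rule.get("path_prefix", "/"))
        if host_matches and path_matches:
            return rule["target_group"]
    return default_target_group

rules = [
    {"path_prefix": "/users", "target_group": "tg-users"},
    {"path_prefix": "/search", "target_group": "tg-search"},
    {"host": "admin.example.com", "target_group": "tg-admin"},
]

print(pick_target_group(rules, "www.example.com", "/users/42", "tg-default"))
print(pick_target_group(rules, "admin.example.com", "/dashboard", "tg-default"))
print(pick_target_group(rules, "www.example.com", "/about", "tg-default"))
```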

SSL/TLS + Load Balancing

- Secure Sockets Layer (SSL) – used to encrypt connections in flight

- Transport Layer Security (TLS) – the newer version of SSL (although people still tend to say “SSL”)

- An SSL/TLS certificate is required on the load balancer for encryption in flight

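- Server Name Indication (SNI) is used when you load multiple SSL certs onto one web server. The client states which hostname it wants during the initial handshake, so the server can pick the matching cert. SNI only works for NLB or ALB or CloudFront (not CLB).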
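As a rough picture of SNI, the server looks at the hostname the client asked for and picks a matching certificate, falling back to a default one. This sketch only handles exact names and simple one-level wildcards, and the certificate names are placeholders.

```python
def choose_certificate(server_name, certificates, default):
    """Pick a certificate for the hostname sent by the client via SNI."""
    if server_name in certificates:
        return certificates[server_name]
    # A wildcard cert such as *.example.org covers exactly one extra label.
    _, _, parent = server_name.partition(".")
    return certificates.get(f"*.{parent}", default)

certificates = {
    "www.example.com": "cert-www",
    "*.example.org": "cert-org-wildcard",
}

print(choose_certificate("www.example.com", certificates, "cert-default"))
print(choose_certificate("api.example.org", certificates, "cert-default"))
print(choose_certificate("other.net", certificates, "cert-default"))
```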

Auto-scaling Groups (ASG)

ASGs are needed to scale out (add instances) and scale in (remove instances) based on the load, i.e. user traffic.

There are three settings:

- Desired capacity: the number of instances the ASG tries to keep running right now

- Minimum size: the minimum number of instances you want

- Maximum size: the maximum number of instances you want, i.e. the most it will scale out to if load increases.

CloudWatch alarms can be set up so that when a certain metric goes up or down, it can instruct ASGs to increase/decrease instances as well. ASGs are also used when unhealthy instances are terminated, and need replacing.

ASGs use launch templates (or the older launch configurations) to launch the EC2 instances. These templates hold the configuration that is used when instances are launched.

ASGs are free, you only pay for the underlying resources that are launched.

The Default Termination Policy states that when scaling in, the ASG first picks the AZ with the most instances, and then terminates the instance in that AZ with the oldest launch configuration.
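Here is a simplified sketch of that selection: find the AZ with the most instances, then take the instance there with the oldest launch configuration. The real policy has further tie-breakers that I have left out, and the instance records are invented for the example.

```python
from collections import Counter

def pick_instance_to_terminate(instances):
    """Pick the instance a simplified default termination policy would remove."""
    az_counts = Counter(i["az"] for i in instances)
    # AZ with the most instances (ties broken alphabetically to stay deterministic).
    busiest_az = max(sorted(az_counts), key=lambda az: az_counts[az])
    candidates = [i for i in instances if i["az"] == busiest_az]
    # Within that AZ, the oldest launch configuration goes first.
    return min(candidates, key=lambda i: i["launch_config_created"])

instances = [
    {"id": "i-1", "az": "eu-west-1a", "launch_config_created": "2020-09-01"},
    {"id": "i-2", "az": "eu-west-1a", "launch_config_created": "2020-06-15"},
    {"id": "i-3", "az": "eu-west-1b", "launch_config_created": "2020-01-10"},
]

print(pick_instance_to_terminate(instances)["id"])
```

Note that i-3 has the oldest configuration overall, but it is not chosen because its AZ is not the one with the most instances.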

Lifecycle Hooks let you run extra steps while an instance is launching or terminating as the ASG scales out and in.

ASG Scaling policies

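- Target Tracking Scaling: you set a target for a metric e.g. I want average CPU to stay at around 40%. If it goes over, the ASG adds instances, and if it drops well below, it removes them.

- Simple/Step Scaling: when a CloudWatch alarm is triggered, add or remove a set number of instances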

- Scheduled Actions: Scale based on known usage patterns e.g. at a certain time, increase/decrease instances

- Scaling Cooldowns: This is a period when no instances are launched or terminated. This ensures that previous scaling activity takes effect first.
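To get a feel for target tracking, here is a back-of-the-envelope sketch. It assumes the metric scales proportionally with the number of instances, so the new desired capacity is the current capacity multiplied by metric/target, rounded up and kept between the minimum and maximum size. AWS manages the real calculation for you, so this is only an approximation, and the 300-second cooldown is the default value used here as an example.

```python
import math

def target_tracking_desired(current_capacity, metric_value, target_value, min_size, max_size):
    """Estimate the desired capacity that brings the metric back to its target."""
    raw = math.ceil(current_capacity * metric_value / target_value)
    return max(min_size, min(max_size, raw))  # never go outside min/max size

def in_cooldown(now_seconds, last_scaling_seconds, cooldown_seconds=300):
    """True while a previous scaling activity should still be left to take effect."""
    return now_seconds - last_scaling_seconds < cooldown_seconds

# 4 instances at 60% CPU with a 40% target: scale out.
print(target_tracking_desired(4, 60, 40, min_size=1, max_size=10))
# 4 instances at 20% CPU with a 40% target: scale in, but not below the minimum.
print(target_tracking_desired(4, 20, 40, min_size=2, max_size=10))
```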

Thank you so much for reading! I hope that this was somewhat useful to others currently studying some AWS. Watch out for more learning and study notes in the coming weeks as I continue my journey to becoming AWS Certified x 2 ☺️

